A Complex but Interesting Relation: Keynes, Mathematics, and Statistics

Abstract

The objective of this methodological paper is to examine a historical milestone in economic method in order to help understand how economics is done today. The chosen author is Keynes and the selected methodological theme is the role of mathematics and statistics in economics. Keynes’s relationship with mathematics and statistics was always complex. His organicist notions helped him to denounce what he saw as wrong, and yet he accepted some practical uses of his theories. The introduction describes Keynes’s route towards his stance on the use of mathematics, the background of classical probability and certainty, and the intellectual stance of Keynes the statistician. Section 1 explains how Keynes influenced the development of national accounts and econometrics while rejecting from the beginning, in his debates and elsewhere, any manifestation of unrestricted faith in the latter. Section 2 outlines how he built a separate notion of probability, moving away from the orthodox conceptions. Keynes saw probability as an objective relation between two statements, with the weight of the argument at the core of his argumentation. Section 3 describes the relation between Keynes and Ramsey, who influenced Keynes’s conception of probability as Maynard moved away from crass objectivism towards logical objectivism and a partial subjectivism. The conclusion is that Keynes preached a rational use of mathematics and statistics. As in the cases of capitalism and economic theorizing, he denounced what he advocated in order to improve it. Perhaps the originality of this article lies in the philosophical perspectives used to illuminate Keynes’s controversies. A parallel practical purpose is to highlight Keynes’s methodological insight: no researcher should adopt a method before being aware of its strengths and limitations.

JEL: A1, A12, B00, B16, C00

Keywords: Keynes, Ramsey, probability, the weight of the argument, uncertainty, mathematics, statistics, econometrics.

Introduction

Keynes was an economist who generated novel notions in the fields of both mathematics and statistics, especially about their uses in economics. This paper deals with Keynes’s guidelines for the use of quantitative methods, which are based on his philosophical core. It first outlines his historical background, which is the purpose of this Section.

Keynes’s route to mathematics

Keynes was an acute and innovative political and moral philosopher between 1899 and 1919. He wrote several epistemological pieces during that period, outstanding amongst them A Treatise on Probability (1908) [1921]. Between 1919 and 1930 he was a top-level civil servant in both the war and postwar realms in Britain, although he found the time to write the non-mathematical A Tract on Monetary Reform (1923). In 1930 Keynes wrote A Treatise on Money, making use of two fundamental equations to describe the ways to arrive at an equilibrium level in both the price level and earnings, but he soon discarded them since output was still a constant. He needed a more qualitative approach. In 1940 Keynes penned the book that is most influential for our purposes, How to Pay for the War (1940), in which he discussed the basics of national accounts, originally set out in the preface of the General Theory of Employment, Interest and Money, or GT (1936). Thereafter Keynes got involved in controversies about the uses of mathematics and statistics in economic analysis and policies, oftentimes preferring a qualitative approach.

The context of Classical probability

Classical literature on probability reigned when Keynes appeared. The classical and the frequentist statisticians belong to the objectivist vision of probability, whereas Keynes defended a special objective, discrete version of logical (not mathematical) probability, which also possesses subjective elements, since logic proceeds from human cognition.

Keynes may have also embraced the conception of uncertainty, which was enunciated by Heisenberg (1901-1976) in quantum physics in 1927. The indeterminacy principle set limits to precision in knowledge wherein errors are non-systematic¹.

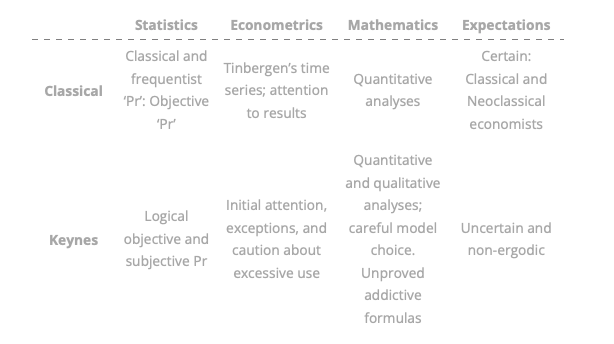

Keynes’s experience of uncertainty came from both intuition and his professional practice. Keynes the practitioner in the 1930s applied the notion of uncertainty to macroeconomic and financial events. Without uncertainty nothing would be at stake in financial markets. Short-term investments and policies are uncertain but manageable. Keynes contradictorily recommended long-period investments but not policies. Table 1 is a summary of the intellectual context of Keynes’s stance on empirical measurement, providing the background to all approaches to be explained.

Table 1: Context of ideas

Source: Author’s elaboration.

A) Keynes’s stance in debates

The classical notion of probability is objective in an infinite sample. All events have the same probability of occurring unless something is defective. For instance, the cardinal probability of obtaining a six after throwing a die is 1/6. This clarity, however, only occurs in pure mathematics or perhaps in physical atomistic phenomena, wherein additional knowledge reveals nothing and human action plays no role.
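The classical equiprobability rule described above can be illustrated with a short sketch. It is not part of Keynes’s or the author’s apparatus; the function names `classical_probability` and `relative_frequency` are hypothetical, and the simulated die is assumed to be fair and to be thrown under identical conditions, which is precisely the closed, atomistic setting the text refers to.

```python
from fractions import Fraction
import random


def classical_probability(favourable, outcomes):
    """Classical rule: favourable cases over equally possible cases."""
    return Fraction(len(favourable), len(outcomes))


def relative_frequency(target, n_throws, seed=0):
    """Share of throws showing `target` in a simulated run of a fair die."""
    rng = random.Random(seed)  # fixed seed so the run can be repeated
    hits = sum(1 for _ in range(n_throws) if rng.randint(1, 6) == target)
    return hits / n_throws


faces = [1, 2, 3, 4, 5, 6]
p_six = classical_probability([6], faces)
print("classical probability of a six:", p_six)

# As the sample grows, the observed share settles near the classical value.
for n in (60, 6_000, 200_000):
    print(n, "throws ->", round(relative_frequency(6, n), 4))
```

The agreement between the two numbers depends entirely on the assumptions of homogeneity and repeatability built into the simulation; nothing in the sketch says what happens when conditions change between throws.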

In contrast, Keynes adopted in the “Adding-up problem” (1904) the doctrine of organic units as outlined by G. E. Moore, who in turn took this conception from Hegel. An organic unity is one wherein the whole is different from the sum of its parts. Moore said that good is indefinable. Keynes then tried to sum goodness by adding up individual goods. This meant a rejection of methodological individualism. Keynes thus enunciated such macroeconomic principles as the fallacy of composition, which allows room for uncertainty (1936). The formula equating the whole with the sum of its parts only holds in special cases.

In “The principles of probability” (1908), Keynes attempted to extend the reach of logical argument by including those cases in which the conclusion is partly entailed by the premises. He also made an effort to align probability with ordinary discourse (so as to turn the problem involved into a practical course of action). In addition, real evidence is not identical to conclusive evidence. The distinction between cogency and irrelevance differs from the dichotomy between proof and non-proof. The core of these insights is that probability is indefinable, but objective in a finite sample, which allows room for decision making. If the game is about choice, the conception of the weight of the argument arises. The weight of evidence is the rational tenet which enables people to decide when to stop the process of acquiring information. This means that numbers do not prove by themselves the veracity of a statement. In A Treatise on Probability (1921) Keynes set the foundations of statistical inference in an unconventional manner. Probability is for him a logical, ordinal concept concerning relations between propositions, which are both objective and subjective. He urged against converting probabilities, or at least all of them, into numerical probabilities. No mathematical expectations whatsoever are valid, since the future is not a continuation of the past. Probability is thus akin to similarity, becoming the foundation of radical uncertainty.

The notion of evidential weight was applicable to investment behaviors in Chapter 12 of GT. Both intuition and the adequate processing of information are relevant for making decisions. It also has to do with both moral risk and rational judgment on conduct. Rod O’Donnell (1989)² and Athol Fitzgibbons (1988)³, among others, consider that Keynes’s approach to probability sets the behavioral, epistemological and ontological bases of the GT.

Our hypothesis is that, as in the case of capitalism or economic theories, Keynes denounced what he advocated in order to improve it. Section 1 deepens Keynes’s insights on improvement.

Keynes’s Empiricism: National Accounts and View of Econometrics

Some heuristic constructs of Classical economics have been criticized by many authors for being too abstract, especially those related to micro-demand theory. Conversely, Keynes’s reputation partly rests on the operationality of his macro-models. In particular, he made use of two quantitative targets for conducting empirical⁴ investigation: national accounts, and econometrics and its debates. This analysis is the purpose of Section 1. In an Appendix to How to Pay for the War (1940), Keynes set the bases for undertaking a numerical account of its elements: consumption, investment, public expenditure, exports and imports, as well as savings. These accounting models still dominate income determination and development policies in empirical macroeconomics. According to Tily (2009)⁵, in terms of national accounts, Keynes was a “theoretician, compiler, supporter and user” (Tily, 2009, Abstract). The development of national accounts was further advanced by Colin Clark (1905-1989), Simon Kuznets (1901-1985), James Meade (1907-1995) and Richard Stone (1913-1991), mainly in the late 1920s and the 1930s. Keynes collaborated with Clark in the early 1930s. The first functioning system of national accounts was that of the United States, in operation since 1947. Keynes also left his imprint on the design of public budget statements. Uncertainty does not appear as an item in national accounts, but perhaps it may be measured in future interest rates or exchange rates, bearing in mind that national accounts stimulate the compilation of data on financial and socio-economic variables.

Realism and Econometrics

Keynes first criticized the pioneering models of Jan Tinbergen (1903-1994) in 1939⁶.
He subsequently welcomed the use of econometrics for treating variables included in national accounts. This was especially true from 1943 on, when he moved from philosophy to expediency. This type of research emerged from the theoretical scheme offered in GT about the components of aggregate demand or output. However, Keynes was methodologically opposed to econometrics because he believed in organic unities and in historical time (as opposed to logical time). Keynes’s critique is about the stability of the representative equations throughout both time and space. Other problems are those related to the selection of variables or their manipulation. He contended that a prior analysis of circumstances must be conducted. To justify his aversion to the plain use of econometrics, Keynes maintained that induction is always difficult. His critique was that the behavior of variables throughout sub-periods is neither uniform nor homogeneous⁷. Keynes was concerned about the road, not only about the destination. For him, human and social behaviors are mostly discontinuous and asymmetrical. Hence, Keynes rejected the unexamined use of mathematics and statistics for explanatory purposes. But he never supported the ruling out of econometrics. Shackle backed Keynes by stating that ignorance of the true Gaussian probability distribution might prevent economists from gaining knowledge in a dynamic environment. In this sense, both classical and frequentist probabilities are consistent with certainty. What Keynes rejected the most was the use of mathematical formalism. Formalism may be related to the notion of economics as a set of atomistic mathematical formulas. Keynes argued that neither atomic nor closed systems exist in economies. He stated that accurate predictions cannot be the outcome of models based on Classical probabilities (TP, Chapter 5) or on certainty (TP, Chapter 8).
Econometrics measures the extent of dependence between explanatory and dependent variables under the assumption that certainty is useful for prediction. However, as the world is continuously changing, risk is not useful. The anti-a-priori econometrician opposed the automatic assumption that Y is a function of X without previously resorting to economic theory or to plain facts. This stance was also related to the choice of contextual narratives over logical economic interpretations.

The debates

The Keynes-Tinbergen debate occurred between 1938 and 1940. Keynes asserted there that results have little value unless the methods have been tested beforehand. Tinbergen answered in a Friedmanite⁸ style that results are the ‘definitive’ proof. Keynes also criticized econometrics not only in terms of the assumption of independence between two variables but also by wondering why the relationship between X and Y must be linear. Keynes evaluated the twin assumptions of homogeneity in variables (whether X and Y are comparable) and their synchronized movements (whether ΔX is comparable to ΔY). This also was a critique of Walras’s economics. There, systems of linear equations for n markets explained by n variables provide results without considering uncertainty. Moreover, according to Keynes it was necessary to know beforehand the reasons for choosing variables and parameters, and to identify whether they are measurable. This is true today, when many researchers oftentimes lack manageable data. Another critique was that econometrics estimates equations with path dependence and lags (explaining Xt as a function of Xt-n), although it is obvious that a variable’s behavior is influenced by its past path.
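Keynes’s concern with the stability of estimated equations across sub-periods can be made concrete with a small numerical sketch. The data below are invented for illustration only, the helper `ols_slope` is a hypothetical name, and the ordinary least squares slope is used simply as a familiar stand-in for the kind of linear relation under dispute; none of this reproduces Tinbergen’s models or Keynes’s own calculations.

```python
def ols_slope(xs, ys):
    """Least-squares slope of y on x for one explanatory variable."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    return cov / var


# Invented series: the relation between X and Y changes after period 10.
xs = list(range(1, 21))
ys = [2.0 * x for x in xs[:10]] + [20.0 + 0.5 * (x - 10) for x in xs[10:]]

slope_first = ols_slope(xs[:10], ys[:10])    # first sub-period
slope_second = ols_slope(xs[10:], ys[10:])   # second sub-period
slope_pooled = ols_slope(xs, ys)             # whole sample, one equation

print("first sub-period :", round(slope_first, 3))
print("second sub-period:", round(slope_second, 3))
print("pooled sample    :", round(slope_pooled, 3))
```

In this constructed case the pooled coefficient lies between the two sub-period coefficients and describes neither of them, which is one way of reading the demand that the homogeneity of the material be examined before a single equation is fitted.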

The most important critique of econometrics is, however, that Tinbergen conducted inductive generalizations (from X to Y). Keynes’s view, partly derived from Hume’s skepticism, was that no intermediate steps must be taken for granted. Keynes asserted that observations must be scattered across subsequent periods, so that the analyst considers both stable and unstable moments. The number of observations must be large, and the exogenous and endogenous variables (X and Y, respectively) must be identified by way of economic analysis. Tjalling Koopmans (1910-1985) provided in 1941 a more systematic logic of the methods employed, perhaps recognizing the role of expectations and the state of confidence in macroeconomics. Trygve Haavelmo (1911-1999) eschewed many of these methodological problems in 1943. Thus, Keynes’s critique in his communication with Harrod (Keynes, 1938e) contended that generalizations are difficult to believe and that prediction is an uncertain task. This is the mathematical version of his phrase: In the long run we are all dead. In Neo-classical models, econometrics relies on axioms and certainty. But no axioms exist in social science since phenomena are organic, according to Keynes. Uncertainty is absent from the IS-LM model, and also from conventional econometrics, although heterogeneity of variances might be present.

Uncertainty is also normally neglected as it breaks the Classical Gaussian statistical core⁹. Conversely, for Keynes errors are systematic and qualitative.

Along the way he conducted a critical assessment of the work of Edgeworth, who attempted to quantify economic events in the way physics is mathematized. He also defined economic science as a mode of thought, which means that it is more than the advocacy of a manual comprised of rigid rules (Keynes, 1938e). However, Keynes conceived in GT his psychological consumption function (C = Ca + bY, where C is consumption, Y is output or income, Ca is autonomous consumption and b is the marginal propensity to consume) in mathematical and aprioristic terms, the marginal efficiency of capital in marginal terms and the money demand function in deterministic terms. Finally, Keynes required econometric assumptions to be precise. This vision arose from his attempts to unify theoretical and empirical approaches in terms of the struggle against unemployment and polarized cycles, leaving a message of epistemic and practical moderation, which is now outlined.

The Epistemology of A Treatise on Probability (TP) (1921)

This Section deepens Keynes’s conception of probability as a logical (objective) relationship between propositions rather than between numbers or events. The purpose is to demonstrate how Keynes’s core in statistics is different from the Classical.

A Newer Conception of Probability

Keynes wrote in TP that decisions do not rely on mathematical expectations. He started from Hume’s claim that induction is an insufficient method for knowing something on the basis of its premises. Thus, Keynes was involved in the study of the logical steps connecting assumptions and implications in proposals. Keynes contributed to the foundation of logical probability from a moral source: he did not believe in Moore’s individual act-consequentialism. For Keynes, Moore’s conception had a bearing on choice, but one which must not be reduced to the calculation of quantities or the aprioristic expectation of results.
Keynes believed in human logic rather than in formal logic (Fitzgibbons, 2001), so uncertainty and expectations were highly relevant in decision-making. In that sense he opposed the rational apriorism of both Descartes and Kant, the continental route from truth to cogency. Keynes would be on the side of the Locke Connection embedded in the usage of empiricism, but after giving a pre-eminent role to thoughts about options.

Revisiting notions of Probability

The first approaches to probability are the Classical and the frequentist, suggested by Jacob Bernoulli (1654-1705) and by Pierre Simon Laplace (1749-1827), both of them being based on the principle of insufficient reason. Events can happen without opposition, with almost 50 percent probability, and repetitions can occur under similar conditions.

No choices exist, since sampling is mechanical. In a distribution, numerical probabilities (1/n; n = number of events) approach the mean, and variances (σ²) are small and infrequent. The Classical economists believed in this scheme since for them the system is self-regulating. Both Classical visions assume homogeneity in events. The probabilities of the possible outcomes of a single event must add up to one. The second approach to probability was suggested by John Venn¹² (1834-1923), who was no stranger to Keynes’s family. It is akin to the Classical view, but related to the limit of relative frequencies of occurrences of an event (for instance, in dice experiments). It results in a sampling that must approach 50-50%. This method is about probabilities between events rather than between numbers, being suitable to the physical world rather than to an ideal platonic world as in the Classical approach. Events in this view are ergodic (repetitious) in Davidson’s terminology (Davidson, 2003)¹³.

Enter Keynes

Keynes would propose a third option. He criticized static standpoints in the examination of probability in social events. His perception of probability was purely logical at that moment (see Section 3). Keynes suggested the use of a non-additive and non-linear approach for measuring probability with a mathematical foundation, but it was not a mathematical relationship. Still, he made probabilities function in a way that is fitting for decision making. A logical probability between propositions (hence related to human knowledge) is ‘frequently’ non-numerical, but ordinal. For Keynes, epistemic (objective) probability was at that moment associated with inductive and intuitive (subjective) probability. Keynes thus suggested a third approach: the logical-objective interpretation of probability. Here events exhibit asymmetries and heterogeneities arising from distinct objects and results of either observation or experimentation.
That is, sampling is non-repetitious in the sense that conditions are different in each experiment (as happens in the social sciences). Truth is independent of opinion. The idea for a decision-maker is to draw conclusions from premises, assuming strong grounds: the weight of evidence (to be explained below). Hume attacked induction by affirming that the number of samples in an experiment is finite, and hence it does not allow us to tell if a result is definitive at any given point, as subsequent observations or experiments might deny former truths. Keynes justified validity in statements by means of induction, but this would lead to overstated subjectivism, although he did not realize this at the beginning. He aimed to solve the problem of the inability to draw inferences in induction by stating that probability possesses varying degrees of evidence. Thereafter Keynes contended that if evidence is augmented in any proposal, probabilities increase so that induction might be justifiable, albeit provisionally. Keynes would later include a subjective dimension (Section 3). At a later stage he would validate inferences by resorting to convention, both in 1936 and in 1938¹⁴. This is the milieu of the notions on probability.

Deepening matters

In Keynes’s initial logical approach, the roles of intuition, certainty, convention and context were relevant to examine the role of induction. However, for him, the cause-effect dichotomy was also related to both sensation and association. Hence no empirical reality could be the foundation of a universal law, since Hume affirmed that the relationship between cause and effect is the result of custom leading to the blind application of universal laws for the verifiability of results. Intuition is a faculty that must be followed by intelligence, for the sake of capturing the dynamics of organic unities. But induction is a-posteriori knowledge, being asymmetrical. Thus, the inductive method is akin to uncertainty. Probability theory was for Keynes based on degrees of belief as the tool for inductive logic. His probability thus was cardinal, wherein induction drove and made causal reasoning flexible. The process of reasoning was also based on analogy. Probability and expectations (based on organic unities and uncertainty) are interrelated. Keynes thus challenged Hume’s skepticism by asserting that the mind is active in perception, attempting to ground knowledge starting with probability. This is 1921, but the road was paved for eventually arriving at a partially subjective view of probability (Section 3). Returning to objectivism, the first step to obtain knowledge is to consider all events as probable, so that the notion of probability must be widened. In his effort to embed the foundations of probability in logical prescriptions, he stated that probability must capture the degree of belief in a proposition, given inconclusive evidence. The second step was to destroy the applicability of the frequentist quantitative approach by contending that numbers do not explain the essence of propositions. Heuristic explanations based on numbers are only suitable for deterministic cases confined to closed systems.
This was his reasoning process about human conduct under limited knowledge. The repercussion is that probability, the logical relationship between hypothesis and evidence, provides only partial veracity. He thus progressed from finding the truth to grounding knowledge, albeit only in method since knowledge always varies.

Keynes versus Classical probability

Probability is hence the degree to which arguments are provisionally conclusive. Hence probability is viewed as non-deterministic. This is why functions in probability¹⁵ are different from those belonging to other statistical procedures such as hypothesis testing¹⁶.

But probability was also a degree of rational belief. Thus Keynes’s conception represented a step beyond both pure intuition and pure induction, since these two methods of knowledge are intrinsically aprioristic, despite their Kantian or Cartesian sophistications. In the basic relationship between the conclusion (proposition “a”) and the premises (evidence “h”), the issue is how to conduct a valid inference process, the so-called Humean problem. Keynes extended the reach of this process by asserting that all phenomena can add new information at every instant. Probability then is non-demonstrative, being thus correlated to uncertainty. TP explained the root of Keynes’s complex and fuzzy epistemology; he did not claim at that stage that universal induction yields certainty. Induction is the estimation of the validity of observations as evidence for a proposition. But since social science faces the problem of generalization from observations, a proposition can never be definitively demonstrated. Induction is only valid in a universe with finite probabilities, which is seldom the case in real life. Keynes initially advocated intuitionist epistemology since he considered it more relevant to knowledge acquisition than Locke’s or Moore’s sense experience. Thus, he overcame the basic paradigms of understanding: the empiricist, wherein external coherence, post-interpretation, and open systems play a vital role; and the aprioristic, wherein internal coherence, pre-interpretation, closed systems, invariant laws, and analogies prevail as they do in the physical sciences. Further, in 1921 Keynes did not believe in the unsophisticated Benthamite utilitarian calculus, which is a form of action grounded in the Classical frequentist approach. In TP, Keynes contended that probabilities clarify how agents may conduct decision making under uncertainty, thereby criticizing Mill’s categorization of cardinal hedonism.
His logical consideration became the benchmark for detecting the appropriateness of actions in an interdisciplinary context, but good choices must have an ethical background. For Moore common sense was grounded in certainty, while for Keynes it was based on probability. Hence, TP offers insights about the nature of the spontaneous actions conducted by animal spirits, which may avoid unexpected results by measuring logical probabilities. Keynes’s probability was thus linked to efficacy. Intuition bears the distinction of not being susceptible to proofs. But Keynes rejected illogical or inherited arguments and categories in TP. Instead, he placed individual judgment (discretion) at the core of decision making at the expense of rules. Moreover, judgments and beliefs based on probability must be connected to action, unlike in Moore’s metaphysical vision. Perhaps Moore considered that probability cannot be connected to applied knowledge due to the existence of certainty in closed systems.

Additional views on Keynes’s notion of probability

According to Zappia (2012)¹⁷, TP attempts to avoid physicalism in knowledge and to give sense to moral principles. A second critique Keynes had of the frequentist approach is associated with the magnitude of the probability of the argument.

Information must be both efficiently obtained and processed, and the difference between a probability assessment and its degree of confidence cannot be found in conventional statistical approaches. Confidence is at a higher level of epistemic knowledge than frequency¹⁸. For Zappia (ibid.) the third part of Keynes’s criticism was his refusal to use mathematical expectations, as they ignore the weight of evidence. Not all events possess the same level of frequency (frequencies fluctuate across experiments). Whenever information is vague the frequentist approach is inappropriate. Keynes relies on qualitative orders, wherein non-numerical probabilities (representing most events in real life) are analogous to probability weights. This heuristic understanding leads to moderation. For Zappia (2012), normal probabilities are represented by the formula E = pA, whilst Keynes’s formula was E = cA, where E = expectations; A = event; p = probability; q = non-probability; and c = p/(1 + q). Thus, Keynes classified the maximization of expected utilities as a special and transient case. Further, expected value is a valid guide only when confidence is at a maximum.
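A brief numerical sketch may help to compare the two formulas just quoted. It rests on two assumptions that are not stated in the text: that the coefficient is read as c = p/(1 + q), and that q, the ‘non-probability’, equals 1 − p. The function names are hypothetical, and the sketch is only an arithmetic illustration, not a reconstruction of Zappia’s or Keynes’s full argument.

```python
def conventional_coefficient(p):
    """c = p / (1 + q), assuming q = 1 - p (an assumption of this sketch)."""
    q = 1.0 - p
    return p / (1.0 + q)


def expected_value(p, amount):
    """Standard mathematical expectation, E = p * A."""
    return p * amount


def weighted_value(p, amount):
    """Expectation using the coefficient c instead of p, E = c * A."""
    return conventional_coefficient(p) * amount


amount = 100.0
for p in (0.1, 0.5, 0.9, 1.0):
    print(
        f"p={p:.1f}  pA={expected_value(p, amount):6.2f}  "
        f"cA={weighted_value(p, amount):6.2f}"
    )
```

Under these assumptions cA never exceeds pA, and the two coincide only at p = 1, so the ordinary expectation appears as a limiting case of the more cautious valuation.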

Keynes thus employed degrees of belief in the place of what used to be called a-priori possibilities. Keynes went further because the degree of belief covers a spectrum rather than being restricted to the dual decision of the robotic world¹⁹, or to numbers.

Since the degree of belief assumes intensities of intentionality in a world dominated by uncertainty, his contentions in 1921 presupposed a flexible conception of both time and space. The ‘Apostle’ William Ernest Johnson (1858-1931) influenced Keynes with respect to inference. But Johnson assumed homogeneity among events and emphasized the relevance of calculus. He referred to exchangeable sequences of random variables, meaning that there is only a finite sequence of them, as in atomism. Obviously, this contention set limits to the use of independent and identically distributed random variables (σ is constant in an experiment), and the inductive hypothesis could fail. This procedure may provide invalid results in the presence of organicism and its elements: heterogeneity and asymmetry. In his youth, Keynes relied on the principle of indifference when designing the scheme of logical probabilities among proposals, but this was corrected when he considered the weight of evidence. People do not make mechanical choices. Under this scheme the rules of probability are logical deductions from one’s own perspective rather than from deterministic axioms in closed systems. To sum up, for Keynes proposals must be logically related in order to make sense under uncertainty. But humans do not always employ logic, and something was still missing. He would turn to subjectivism. From that point forward he would be in favor of inter-subjective objectivism, as explained in Section 3.

A brief interlude

From yet another perspective, Keynes’s notion of uncertainty is relevant in epistemic terms. A core is the philosophical basis of a school and defines its positive heuristics, both of which comprise its Scientific Research Program, which can be either progressive or degenerative.

Keynes’s core in Lakatosian terms (Lakatos, 1974, 1983)²⁰ claims reform by means of his system, characterized by organicism, irrationality, animal spirits, qualitative analyses, non-self-regulating systems, and a new definition of economics as the science of decision making under uncertainty rather than under scarcity.

Returning to his letter to Harrod (Keynes, 1938e), there Keynes offered a contradictory definition of economics. Economics was both a branch of logic and a moral science. This part of Keynes’s core is compatible with both his modification of the notion of probability and his rejection of the indiscriminate use of mathematics.

Frank Plumpton Ramsey (1903-1930)

The contribution of the late ‘Apostle’ Frank P. Ramsey (1903-1930) to Keynes’s work on probability was vital. Ramsey convinced Keynes of the relevance of the subjective dimension for the selection of those criteria apt for decision making in an uncertain realm. Ramsey criticized Keynes’s purely logical (objective) approach to probability. This stance is considered a healthy underpinning of Keynes’s objective and subjective approach to the calculus of probabilities. Ramsey criticized Keynes’s Kantian-type logical probability relations, since even in the light of objective facts individuals may attribute different probabilities to distinct events. Ramsey was correct. Keynes, however, was right in realizing that probability is related to the logic of proposals. Since information is uncertain, all humans can do is to be reasonable in an irrational world. But Keynes’s uncertain factors affecting human behavior might be captured by understanding the subjectivity embedded in probability choices. This was Ramsey’s advice. For García Duarte (2007), Ramsey’s distinction between formal and human logic²¹ had an influence on Keynes. Ramsey was, arguably, more interested in perceptions than in evidence both as the origin and proof of knowledge. On reflection, Keynes accepted Ramsey’s subjective notion of probability in the weight of the argument, since even though objective knowledge was related to rational judgment, both introspection and values mattered as well. The individual mind was an organic unity for Keynes.
But individual thought may still be receptive to other thoughts according to both inter-subjectivity and the principle of uniformity. Yet individuals are dissimilar in experience and circumstance, generating unexpected behaviors and intentions as the result of events. Keynes hence questioned the theory of the representative individual in economics but accepted it as the unit of analysis in probability, epistemology and ethics in 1921. Keynes the eternal compromiser wondered again at this time about the roles of intuition and induction. Eventually he embraced Ramsey’s subjective approach to a certain extent, believing that logical associations of proposals deny the possibility that algorithms may represent the way in which human beings think beyond utility maximization. Human choices are qualitative, as exemplified by degrees of belief. Ramsey had written about uncertainty based on subjective probability when he was a member of the ‘Apostles’ (1921-1929). According to García Duarte (2007), Ramsey wrote his first criticism of TP in 1922 on philosophical judgment, based on Moore. For Ramsey, the best alternative to rigid mathematics was to gamble with expectation; in other words, he championed a subjective ex-post approach to probability. In return, Keynes explained to Ramsey the advantages of intuition. Radical uncertainty makes economies unstable and prevents them from recovering rapidly, because insufficient knowledge translates into a lack of efficacy, and the remedy is to rely on probability. Hence, for some writers the foundation of Keynes’s economic thinking was outlined in TP as a reaction against Moore’s notions of common sense, utilitarianism, rationality, and his implicit belief in frequentist probability. Keynes argued that the Classical and the frequentist approaches were not applicable in a complex world. Keynes’s weight of the argument is backed by the theory of groups, which renders it inter-subjective.
Finally, Ramsey recommended that mathematics need not be used at each and every opportunity. For him, the results delivered by numerical models must be simple, interesting and not obvious. He wrote that evidential weight, information and knowledge could be obtained at a reasonable price.

Conclusion

Keynes preached the rational use of mathematics, whereas his conception of probability was innovative. Although Keynes opposed the unrestricted use of econometrics as a general rule, he helped that science advance through his creation of macroeconomics. The implication is that both unexamined econometric formulations and aprioristic mathematical applied economics rest on shaky foundations in terms of unexamined applicability, not of rationality. Our contribution is to show that Keynes’s attitude to mathematics is the same as his attitude to rationalism, individualism, fixed rules or logical time: he urged either reserve or moderation, and hence methodological reform. It is shown here that Keynes’s notions of probability, econometrics and mathematics reflect his Lakatosian core. These notions are linked here with other events in Keynes’s life. The attempt to unify his philosophy may clarify his reform of the use of quantification. Some conventional uses of mathematics on the part of Keynes were highlighted here as an additional contribution, for example his marginal propensity to consume, but those were the exceptions confirming the rule. He might have taken this approach in GT for the sake of simplicity. The practical implication of this analysis is to use mathematics moderately and to rely on observation, facts, logic, experience and economic theory before doing statistics. The ongoing debate between Davidson and O’Donnell about the relevance of mathematics in economics concerns whether the bases of non-ergodicity (non-repetitious, discontinuous events) are ontological or epistemological.
Our view is that Keynes’s position was epistemological, but both perspectives are interrelated. The debate about the use of mathematics is alive. For example, all stock exchanges quantify longitudinal behaviours, but ignore differences in financial penetration, the social meaning of finance, or the weight of evidence. Finally, most students of economics must cope with many courses on mathematics at the beginning of their careers. The advice is to do math, especially discrete mathematics, but not solely math.

1 Heisenberg, Werner (1930) [1949]. The Physical Principles of the Quantum Theory, trans. C. Eckart and F. C. Hoyt, New York: Dover.

2 O’Donnell, Rod (1989). Keynes, Philosophy and Economics, Chapter 9.
3 Fitzgibbons, Athol (1988). Keynes’s Vision: A New Political Economy, Oxford: Oxford University Press.
4 In the view of most members of the ‘Locke Connection’, only what can be measured is useful for making decisions.
5 Tily, Geoff (2009). “John Maynard Keynes and the development of national accounts in Britain, 1895-1941.” The Review of Income and Wealth 55(2), pp. 331-59.
6 Lawrence Klein (1920-2013) would claim that this type of empirical exercise helped to validate the Keynesian Revolution.
7 Locke believed, like Newton, in the existence of uniform and homogeneous movements.
8 Friedman was concerned with results rather than assumptions, but he was also against excessive formalism in economics.
9 This mistake is committed by conventional financiers who do not distinguish between risk and uncertainty.
10 Bernoulli, Jacob (1713). Ars Conjectandi. Opus Posthumum, Basel: Thurnisii Fratres.
11 Laplace, Pierre S. (1820) [1923]. Théorie Analytique des Probabilités, Paris: Courcier.
12 Venn, John (1866). The Logic of Chance, 1st ed., London and Cambridge: Macmillan.
13 Davidson, Paul (2003). “Is ‘mathematical science’ an oxymoron when used to describe economics?”, JPKE.
14 It reflected a change of view as in ‘My Early Beliefs’ (1938), wherein he accepted traditional views.
15 Hence, Keynes advocates discretion in the use of policies, discarding (deterministic) rules.
16 Keynes is critical of statistics for its reliance on the interrelated premises of atomism in variables, data independence, random errors, and the assumption that the present is a continuation of the past. No Gaussian curve of probabilities exists for him.
17 Zappia, Carlo (2012). “Re-reading Keynes after the crisis: probability and decision,” Department of Economics, University of Siena, 646.
18 Keynes still maintains in 1921 that individuals make personal choices, just as in the “atomist” Classical and Marshallian schools, without internalizing information on social conditions and preferences.
19 The black-and-white view of the world corresponds to either physical facts or paranoid minds.
20 Lakatos, Imre (1974) [1983]. La Metodología de los Programas Científicos, Madrid: Alianza Editorial.
21 For him, it is captured in the notion of conventions from 1936 onwards.

References

García-Duarte, P. 2014. “Frank Ramsey” (October 7, 2014). The Palgrave Companion to Cambridge Economics (forthcoming). Available at SSRN: https://ssrn.com/abstract=2506786.
Keynes, J.M. (1921) 2004. A Treatise on Probability, NY: Dover Publications. ISBN 978-0-486-49580-4.
Keynes, J.M. (1921) 2015. A Treatise on Probability. In The Essential Keynes, introduced and edited by Robert Skidelsky, Chapter 5, London: Penguin.
Keynes, J.M. 1940. How to Pay for the War. Digital Library of India Item 2015.49957, archive.org.detail.
Keynes, J.M. 2007. “Professor Tinbergen’s method,” Voprosy Ekonomiki, N. P. Redaktsiya zhurnala “Voprosy Ekonomiki”, vol. 4.
Keynes, J.M. (1904) 2015. “The adding-up problem.” In The Essential Keynes, introduced and edited by Robert Skidelsky, Chapter 3, London: Penguin.
Keynes, J.M. (1908) 2015. “The principles of probability,” KP 20D. In The Essential Keynes, introduced and edited by Robert Skidelsky, Chapter 3, London: Penguin.